Perplexity Just Changed the Game for Financial AI Agents — And Most People Aren’t Talking About It

How one tool call is quietly rewriting the rules for how developers build finance-grade AI applications

There’s a particular kind of frustration that every developer building a financial AI product knows well. You get your language model working beautifully. The chat interface is clean. The prompts are dialled in. And then your first real user asks something completely reasonable — “What’s the current P/E ratio for NVIDIA?” or “Give me a breakdown of Apple’s last earnings call” — and suddenly everything falls apart.

Your model hallucinates a number with alarming confidence, retrieves a figure from training data that is months out of date, or simply admits it doesn’t know. You scramble to integrate a financial data provider. Then you realise that provider requires a separate API key, a separate billing relationship, a separate set of documentation to understand, and hours of engineering time just to wire it up properly. And even then, the data comes back raw and unstructured, without any citations your users can actually verify.

This has been the state of financial AI development for years now. It’s painful, expensive, and strangely under-discussed.

That’s exactly what makes Perplexity’s announcement of Finance Search in the Agent API genuinely significant — not just as another product update, but as a potential inflection point in how financial intelligence gets built into AI products.

What Perplexity Actually Launched — And Why It’s Different

Let’s be precise about what was announced, because the nuance matters.

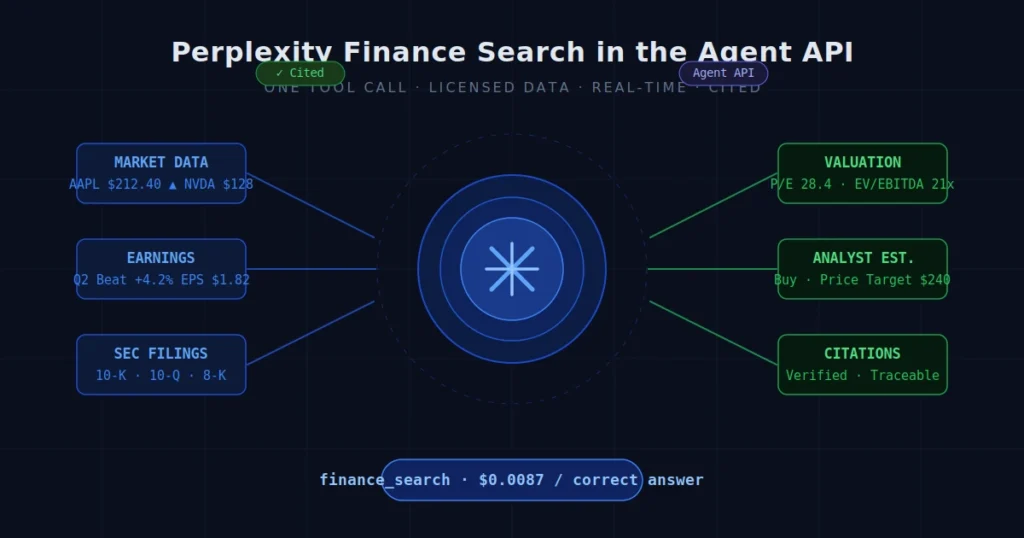

Perplexity has added a new tool type to its Agent API called finance_search. In a single tool call, developers can now retrieve licensed financial datasets, near-real-time market data, and web-sourced citations — all in one shot, all through one API, without managing any separate data provider integrations.

This isn’t a wrapper around Yahoo Finance. This isn’t a scraper disguised as a data product. Perplexity is pulling from licensed financial data sources and packaging it alongside their existing search infrastructure, which means every answer comes with traceable citations that financial agents can verify.

The scope of what finance_search actually covers is substantial. According to Perplexity’s documentation, it retrieves structured financial data for public companies and ETFs including real-time and historical quotes, annual and quarterly financial statements, key ratios, earnings transcripts and filings, beat/miss histories, analyst estimates, insider activity, market movers, ETF constituents, and index details. That’s not a niche slice of financial data — that’s almost everything a developer would need to build a reasonably sophisticated financial research or monitoring tool.

And for developers wondering about cost: the tool is billed at $5 per 1,000 invocations, separate from model token costs. That works out to half a cent per call, which stays economical even for relatively high-usage applications, especially when you factor in what you’re not paying for in terms of separate data provider subscriptions.
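
To make the pricing concrete, here is a minimal sketch of the arithmetic. The $5 per 1,000 invocations figure comes from the announcement; the function name and the example workload are hypothetical, and model token costs are deliberately left out.

```python
# Pricing figure quoted above: $5 per 1,000 finance_search invocations.
PRICE_PER_1000_CALLS_USD = 5.0


def finance_search_cost(invocations: int) -> float:
    """Return the tool-call cost in USD, excluding model token costs."""
    if invocations < 0:
        raise ValueError("invocations must be non-negative")
    return invocations * PRICE_PER_1000_CALLS_USD / 1000


# Hypothetical workload: 2,000 users, 10 tool calls each per day, 30 days.
monthly_calls = 2_000 * 10 * 30
print(f"{monthly_calls:,} calls cost ${finance_search_cost(monthly_calls):,.2f}")
```

Swapping in your own traffic estimate gives a quick upper bound on the tool-call part of the bill before any caching or deduplication.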

The Benchmark Nobody Was Expecting

Here’s where the announcement gets genuinely interesting — and a little competitive.

Perplexity released performance data from FinSearchComp, which is described as the first open-source benchmark specifically designed for open-domain financial search. This isn’t a benchmark Perplexity invented to make themselves look good. FinSearchComp was developed by independent financial experts and includes 635 expert-crafted questions across three sub-tasks: Time Sensitive Data Fetching, Simple Historical Lookup, and Complex Historical Investigation.

What makes this benchmark particularly brutal is how badly AI systems generally perform on it. On straightforward time-sensitive data retrieval tasks, even the top models average below 60% accuracy. On simple historical lookups, accuracy drops below 40%. And on complex historical investigation tasks that require multi-step reasoning across multiple data points, performance collapses to below 20%. Without access to a search tool at all, models score essentially zero on time-sensitive questions.

The chart Perplexity shared tells a vivid story. On the “Cost Per Correct Answer” metric for the time-sensitive tier of FinSearchComp, the numbers break down roughly as follows:

- Claude Opus 4.7 with built-in web search: approximately $0.3878 per correct answer

- exa/answer: approximately $0.0556

- GPT 5.5 with built-in web search: approximately $0.0386

- Gemini 3.1 Pro with built-in web search: approximately $0.0333

- parallel/lite: approximately $0.0113

- Perplexity/Sonar with Finance Search tool: approximately $0.0087

That last number is striking. Perplexity’s Finance Search tool delivered the lowest cost per correct answer in the cohort, roughly 23% below its nearest competitor (parallel/lite) and more than 40 times cheaper than the most expensive entry, while also achieving the highest accuracy for live financial data in the Time Sensitive tier.
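
The gaps are easier to judge as multiples of the cheapest entry. The sketch below uses only the approximate figures listed above; the shortened labels and variable names are mine.

```python
# Approximate cost per correct answer (USD), as listed above.
cost_per_correct = {
    "Claude Opus 4.7 + web search": 0.3878,
    "exa/answer": 0.0556,
    "GPT 5.5 + web search": 0.0386,
    "Gemini 3.1 Pro + web search": 0.0333,
    "parallel/lite": 0.0113,
    "Perplexity/Sonar + Finance Search": 0.0087,
}

# Find the cheapest entry and express every other entry as a multiple of it.
cheapest_name = min(cost_per_correct, key=cost_per_correct.get)
cheapest_cost = cost_per_correct[cheapest_name]

multiples = {
    name: cost / cheapest_cost for name, cost in cost_per_correct.items()
}

for name, multiple in sorted(multiples.items(), key=lambda item: item[1]):
    print(f"{name:<36} ${cost_per_correct[name]:.4f}  {multiple:5.1f}x")
```

On these numbers the nearest competitor costs about 1.3 times as much per correct answer, and the most expensive entry about 45 times as much.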

The second chart they shared shows the FinSearchComp T1 score tracked over time, specifically in the window after market close (22:00 CEST). Perplexity/Sonar with Finance Search consistently holds the top line, maintaining its accuracy advantage over GPT 5.5, Gemini 3.1 Pro, Claude Opus 4.7, parallel/lite, and exa/answer throughout the measurement window.

These aren’t cherry-picked lab conditions. The time-sensitive tier is specifically designed to test real-world conditions where data freshness matters most — stock prices, exchange rates, commodity prices. It’s the kind of data that goes stale within minutes, not days.

The Deeper Problem This Solves

To appreciate why Finance Search matters, you have to understand what financial AI development has actually looked like until now.

The fundamental challenge isn’t getting a language model to sound smart about finance. Modern LLMs are remarkably good at financial reasoning when given clean, current data. The problem is the data pipeline itself — specifically, three compounding issues that have made financial AI notoriously difficult to build well.

First: Staleness. Language models have knowledge cutoffs. Their internal financial knowledge — earnings data, stock prices, analyst estimates, SEC filings — can be months or years old. For most applications this is fine. For financial applications it’s often a dealbreaker. A model that confidently quotes NVIDIA’s Q2 2024 earnings data when a user is asking about the current quarter isn’t just unhelpful — it can be actively misleading.

Second: Data provider fragmentation. If you want real-time market data, you integrate with one provider. If you want earnings transcripts, you need another. SEC filings might come from EDGAR directly, but parsing EDGAR is its own engineering project. Analyst estimates live behind expensive institutional databases. Building a comprehensive financial data layer means managing multiple vendor relationships, multiple authentication flows, multiple data formats, and multiple pricing structures — before you’ve written a single line of your actual application logic.

Third: The verifiability problem. Financial decisions carry real stakes. Users — whether retail investors, financial analysts, or compliance officers — cannot simply trust an AI’s answer at face value. They need to be able to trace every data point back to a source they can verify independently. This is what Perplexity means when they say every result includes citations. It’s not a minor quality-of-life feature. In financial applications, citations are infrastructure.

Finance Search, at its core, is an attempt to solve all three of these problems in a single tool call. Whether it fully succeeds depends on your specific use case, but the architectural approach is sound.

What Developers Can Actually Build With This

Let’s get concrete. The tweet thread from Perplexity specifically mentions three categories of agent tasks that Finance Search enables without separate data provider integrations: valuation lookups, earnings recaps, and market monitors.

Valuation lookups are the workhorse use case. “What’s NVIDIA trading at right now, and what is its current P/E?” is actually the example Perplexity provides in their Agent API documentation for Finance Search. Before this tool existed, answering that question in an agent context required either accepting stale training data, integrating a market data API separately, or cobbling together web search results that may or may not have been accurate. Now it’s a single tool call that returns structured, licensed data with citations.
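
For readers who want the arithmetic behind the example question, a P/E ratio is simply the share price divided by earnings per share. The sketch below is illustrative only: the function name and the numbers are hypothetical, and it makes no claim about how Finance Search computes or returns the ratio.

```python
from typing import Optional


def pe_ratio(price: float, eps: float) -> Optional[float]:
    """Price divided by earnings per share.

    Returns None when EPS is zero or negative, since the ratio is
    conventionally treated as not meaningful in that case.
    """
    if eps <= 0:
        return None
    return price / eps


# Hypothetical inputs, not real market data.
example_price = 150.0
example_eps = 3.0
print(pe_ratio(example_price, example_eps))
```

An agent can use a check like this to sanity-test a returned ratio against the returned price and EPS before showing it to a user.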

Earnings recaps are where the transcript and filing access becomes valuable. Earnings calls are dense, jargon-heavy, and time-consuming to process manually. An agent that can pull an earnings transcript, identify the guidance discussion, surface the beat/miss history for the last several quarters, and cross-reference analyst estimates — all in real time — is genuinely useful to a wide range of users, from individual investors doing research to analysts building client reports.
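
A beat/miss history boils down to comparing reported figures against estimates. Here is a minimal sketch of that calculation, assuming you already have estimate and actual EPS per quarter; the data is made up and the function names are hypothetical.

```python
def surprise_pct(actual: float, estimate: float) -> float:
    """Earnings surprise as a percentage of the estimate."""
    if estimate == 0:
        raise ValueError("estimate must be non-zero")
    return (actual - estimate) / abs(estimate) * 100.0


def beat_miss(actual: float, estimate: float) -> str:
    """Label a quarter as a beat, a miss, or in line with the estimate."""
    if actual > estimate:
        return "beat"
    if actual < estimate:
        return "miss"
    return "in line"


# Hypothetical quarters: (label, estimated EPS, actual EPS).
quarters = [("Q1", 1.00, 1.04), ("Q2", 1.10, 1.05), ("Q3", 1.20, 1.20)]

history = [
    (label, beat_miss(actual, estimate), round(surprise_pct(actual, estimate), 1))
    for label, estimate, actual in quarters
]

for label, outcome, pct in history:
    print(f"{label}: {outcome} ({pct:+.1f}%)")
```

In a real agent the quarter data would come from the tool's structured results rather than a hard-coded list.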

Market monitors are perhaps the most interesting category for developers thinking about ambient agents and background workflows. The ability to track market movers, insider activity, and sector-level data on an ongoing basis — without standing up a separate data infrastructure — makes it practical to build lightweight monitoring agents that would have required significant engineering overhead before.
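
A lightweight monitor of this kind can be sketched as a polling loop. Everything here is an assumption for illustration: `fetch_moves` stands in for whatever function you write to call the API and return percentage moves per ticker, and the tickers, threshold, and interval are invented.

```python
import time
from typing import Callable, Dict, List, Tuple


def run_monitor(
    fetch_moves: Callable[[], Dict[str, float]],
    threshold_pct: float,
    polls: int,
    interval_s: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> List[Tuple[str, float]]:
    """Poll fetch_moves and collect tickers whose absolute move meets the threshold."""
    alerts: List[Tuple[str, float]] = []
    for i in range(polls):
        for ticker, pct in fetch_moves().items():
            if abs(pct) >= threshold_pct:
                alerts.append((ticker, pct))
        # Wait between polls, but not after the final one.
        if i < polls - 1:
            sleep(interval_s)
    return alerts


def fake_fetch() -> Dict[str, float]:
    """Canned data standing in for a real market-movers lookup."""
    return {"AAA": 0.4, "BBB": -6.2, "CCC": 5.0}


demo_alerts = run_monitor(fake_fetch, threshold_pct=5.0, polls=1)
print(demo_alerts)
```

Passing the fetch function and the sleep function in as parameters keeps the loop testable offline, and each poll maps to billable tool invocations, so the interval directly controls cost.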

Beyond those three categories, the Finance Search tool’s coverage of ETF data and index details opens up portfolio analysis use cases. The segment and KPI data access enables the kind of competitive intelligence applications that financial professionals currently pay significant money for through Bloomberg terminals or PitchBook subscriptions.

The Context: Perplexity’s Broader Agentic Pivot

This launch doesn’t exist in isolation. It’s part of a larger strategic shift at Perplexity that’s been accelerating through 2025 and into 2026.

The company has been building out what it calls the Agent API as a full-stack, model-agnostic platform — one that aspires to replace the model provider, search layer, and embeddings infrastructure that developers currently juggle from separate vendors. The pitch is simple: one API key, one billing relationship, access to frontier models from OpenAI, Anthropic, Google, and xAI, plus Perplexity’s own search and retrieval infrastructure.

Finance Search fits squarely into this strategy. Financial data is one of the most valuable, most complicated, and most fragmented data categories in software development. If Perplexity can be the layer that abstracts that complexity — and do it cost-effectively enough to beat competitors on the FinSearchComp benchmark — it creates a compelling reason for developers building finance-adjacent applications to consolidate around their platform.

This is also consistent with Perplexity’s expansion into consumer finance features. Users in the US and Canada can now connect brokerage accounts to Perplexity via Plaid, enabling portfolio-level analysis directly in the Perplexity interface. The company has integrated Polymarket prediction data for market-relevant questions, and added premium data from CB Insights, PitchBook, and Statista for enterprise users. Finance Search for the Agent API is the developer-facing expression of the same underlying investment in financial data infrastructure.

The company’s scale is worth noting here. Perplexity’s APIs power Bixby and Samsung Internet on Samsung Galaxy devices, and the company recently entered a three-year, $750 million commitment with Microsoft Azure to support its infrastructure needs. With a valuation that has grown to approximately $21 billion following its Series E-6 funding round, and annual recurring revenue that reportedly grew from around $80 million in late 2024 to approximately $200 million by early 2026, this isn’t a small company making incremental product updates. It’s a company that has the resources to invest seriously in data licensing relationships and API infrastructure.

The Citation Question: Why It Matters More Than You Think

One line in Perplexity’s announcement stands out above most others: “Every result includes citations, so financial agents can be current, accurate, and easy to verify.”

This deserves more than a passing mention, because it speaks to a genuinely hard problem in financial AI that doesn’t get enough attention: the accountability gap.

When a financial agent makes a claim — “Apple beat earnings estimates by 4% in Q2” — the question of where that information came from is not merely academic. In regulated environments, financial advisors and institutional investors are often legally required to document the sources underpinning their analysis. In consumer applications, trust is built or destroyed by whether users can click through to verify what they’re being told. In enterprise applications, procurement teams evaluating AI tools for finance teams often have specific requirements around data provenance and auditability.

The hallucination problem in AI is well understood at this point. What’s less often discussed is that even when a model gets the right answer, the inability to verify that it’s right creates a separate problem. A financial agent that gives you the correct P/E ratio but can’t point you to where it got that number is still less useful than one that can.

Perplexity’s citation infrastructure — built from their core search product — is one of their most defensible assets. Every result traces back to an identifiable source. In financial contexts, that often means licensed data providers, regulatory filings, or official company disclosures. That traceability is not a luxury feature. For many financial applications, it’s a prerequisite.

What Finance Search Doesn’t Cover — And Why That’s Okay

It’s worth being honest about the limitations here, because no product announcement should be taken at pure face value.

Finance Search in the Agent API is focused on public companies and ETFs. If you’re building applications around private company data, alternative assets, derivatives pricing, or fixed income in depth, you’re going to need supplementary data sources. The tool is designed for the broad middle of financial data use cases, not for specialist applications at the edges.

The real-time data is described as “near-real-time” in Perplexity’s own documentation — not tick-level data. For applications that require millisecond-level price accuracy, this tool is not the right solution. For applications that need data that’s accurate within a few minutes during market hours, it should be more than sufficient.
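
If your application has a freshness requirement, it is worth enforcing it explicitly rather than assuming it. The sketch below assumes you can obtain an as-of timestamp for each quote; whether and how the tool exposes one is something to confirm in the documentation. The five-minute default is an arbitrary example.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def is_fresh(
    as_of: datetime,
    max_age: timedelta = timedelta(minutes=5),
    now: Optional[datetime] = None,
) -> bool:
    """Return True if a quote timestamp is no older than max_age."""
    if as_of.tzinfo is None:
        raise ValueError("as_of must be timezone-aware")
    current = now if now is not None else datetime.now(timezone.utc)
    return current - as_of <= max_age


# Hypothetical reference time and quote timestamps.
reference = datetime(2026, 1, 5, 15, 0, tzinfo=timezone.utc)
recent_quote = reference - timedelta(minutes=2)
stale_quote = reference - timedelta(minutes=12)

print(is_fresh(recent_quote, now=reference))
print(is_fresh(stale_quote, now=reference))
```

An agent can refuse to answer, or label the figure as delayed, whenever this check fails.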

The tool also appears geared toward English-language markets and common global exchanges. FinSearchComp itself includes a Greater China subset, so international coverage is at least being measured, but developers building for highly localised markets should verify coverage for their specific needs.

None of these limitations are dealbreakers for the vast majority of financial AI applications. They’re just honest context for making an informed decision about whether Finance Search fits your specific use case.

A Broader Shift in the Developer Landscape

Zooming out, the Finance Search launch reflects something larger happening in the AI developer ecosystem: the gradual consolidation of what used to require multiple integrations into unified, opinionated APIs.

A few years ago, building a sophisticated AI application meant assembling a stack from scratch: an LLM provider, a vector database, a retrieval layer, domain-specific data providers, and a search infrastructure — all stitched together with custom code and maintained as separate relationships. That’s still a viable approach, and for some applications it’s the right one. But the overhead is substantial, and it scales poorly.

The alternative model — exemplified by what Perplexity is building with the Agent API — is a platform that handles more of that stack internally, exposing clean tool interfaces that abstract the underlying complexity. Finance Search is a specific expression of this philosophy applied to one of the most valuable and complex data domains.

If Perplexity can make the same argument stick for medical data, legal data, or scientific literature — each of which faces analogous problems of staleness, fragmentation, and verifiability — the Agent API starts to look less like a search tool and more like a general-purpose infrastructure layer for knowledge-intensive AI applications.

That’s a big vision, and Perplexity is clearly pursuing it with intention. Whether they execute well enough to win that market against OpenAI, Anthropic, Google, and others who are building similar infrastructure is an open question. But the Finance Search launch suggests they’re at least thinking about the right problems.

For Developers: Getting Started

If you want to actually try Finance Search in your own applications, the entry point is Perplexity’s Agent API. The tool is available via the finance_search tool type in API requests to https://api.perplexity.ai/v1/agent.

The code example Perplexity provides in their documentation is straightforward:

    from perplexity import Perplexity

    client = Perplexity()

    # Enable the finance_search tool for this request.
    response = client.responses.create(
        model="perplexity/sonar",
        input="What's NVIDIA trading at right now, and what is its current P/E?",
        tools=[{"type": "finance_search"}],
    )

    # Print the model's final text answer.
    print(response.output_text)

The finance_search tool is billed at $5 per 1,000 invocations, separately from model token costs. For comparison, a typical Pro search in Perplexity’s search models costs $5 per 1,000 requests, so the pricing is in line with their existing tools.

The Python SDK is installable via pip install perplexityai, and the Agent API endpoint is available for immediate use with an API key from Perplexity’s developer platform.
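
In an application you will probably want that call behind a small helper. The sketch below wraps the same `responses.create` call shown in the documentation example; the helper name and the stub classes are my own additions, included so the snippet runs offline without an API key.

```python
from typing import Any


def ask_finance(client: Any, question: str, model: str = "perplexity/sonar") -> str:
    """Send one question with the finance_search tool enabled and return the text answer."""
    response = client.responses.create(
        model=model,
        input=question,
        tools=[{"type": "finance_search"}],
    )
    return response.output_text


# Stand-ins for the real SDK objects, used only for an offline demo.
class _StubResponse:
    output_text = "stub answer"


class _StubResponses:
    def __init__(self) -> None:
        self.last_kwargs: dict = {}

    def create(self, **kwargs: Any) -> _StubResponse:
        self.last_kwargs = kwargs
        return _StubResponse()


class StubClient:
    def __init__(self) -> None:
        self.responses = _StubResponses()


stub = StubClient()
print(ask_finance(stub, "What is NVIDIA's current P/E?"))
```

With the real SDK installed, you would pass `Perplexity()` (imported from `perplexity`, as in the documentation example) instead of `StubClient()`.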

The Bottom Line

Here’s the honest take: Perplexity Finance Search in the Agent API is a genuinely useful product for a genuinely under-served part of the developer market. Building financial AI agents has been harder than it should be, primarily because of data access complexity and the fragmented vendor landscape. This launch meaningfully reduces that friction.

The benchmark performance is real and impressive. The lowest cost per correct answer in the FinSearchComp T1 cohort, with the highest accuracy for live financial data — that’s not a marginal win. That’s a meaningful lead on a test designed to be hard.

The citation architecture matters more than the press release makes it sound. In financial applications, verifiability isn’t a nice-to-have. It’s often the difference between a product that can be deployed in a regulated context and one that can’t.

And the pricing — $5 per 1,000 Finance Search invocations — is competitive enough that it doesn’t obviously price developers out of building applications on top of it.

What Perplexity is building here is not just a new tool. It’s a case study in how platform thinking can create genuine developer leverage in domains that have historically been painful to work with. If they can make Finance Search work well enough that developers stop thinking about data layer complexity and start thinking about what they can build on top of it, they’ll have created something valuable.

The question is whether the execution matches the promise. Based on the benchmark data, there are real reasons to be optimistic.

Have you tried building with Perplexity’s Finance Search tool? We’d love to hear what you’re working on — and whether the real-world performance matches the benchmark numbers.
