The Latest AI Updates, April 2026: From Chatbots to Autonomous Agents

Published: April 2026

Reading Time: 12 minutes

Executive Summary

If you’ve been following artificial intelligence over the past few years, buckle up—because April 2026 has changed everything. We’ve officially moved beyond the era of simple chatbots that could hold a conversation but not much else. What we’re witnessing now is the dawn of the “Agentic Era,” where AI doesn’t just talk—it acts.

This guide breaks down the major AI releases of early 2026, from OpenAI’s GPT-5 to Google’s Deep Research agent, which can condense hours of work into minutes. We’ll explore how Meta’s Llama 4 is widening access to powerful open models, why Anthropic’s Claude 4 is gaining ground among professionals in law and medicine, and, most importantly, what the rise of autonomous AI agents means for your daily life and work.

Whether you’re a developer building the next big app, a business owner looking to streamline operations, or simply someone trying to stay informed, this guide will give you the insights you need to navigate the AI landscape of 2026.

Introduction: The State of Intelligence in April 2026

Remember 2023? That feels like ancient history now. Back then, we were marveling at chatbots that could write decent emails and generate somewhat coherent images. The hype cycle was intense, with every week bringing a new “revolutionary” announcement. But let’s be honest—much of it felt like novelty without real substance.

Fast forward to April 2026, and the conversation has shifted dramatically. We’re no longer asking “Can AI write a poem?” or “Will it pass the Turing test?” Instead, we’re asking “Can AI handle my entire research project?” or “Can it manage my travel bookings while I focus on what matters?”

The answer, increasingly, is yes.

What we’re seeing in 2026 is what industry analysts are calling “stability through utility.” The flashy demos have given way to reliable, production-ready tools that deliver tangible value. AI has matured from a conversational novelty into a genuine collaborator—one that doesn’t just respond to your prompts but anticipates your needs and executes complex workflows with minimal supervision.

This shift matters for everyone. For professionals, it means reclaiming hours previously lost to repetitive tasks. For developers, it means access to more powerful, efficient tools. For everyday users, it means technology that finally feels intuitive rather than frustrating.

In this guide, we’ll walk through the major players, their latest releases, and what these 2026 AI trends mean for you. Let’s dive in.

OpenAI’s GPT-5: The New Benchmark

The Arrival of Native Multi-Modality

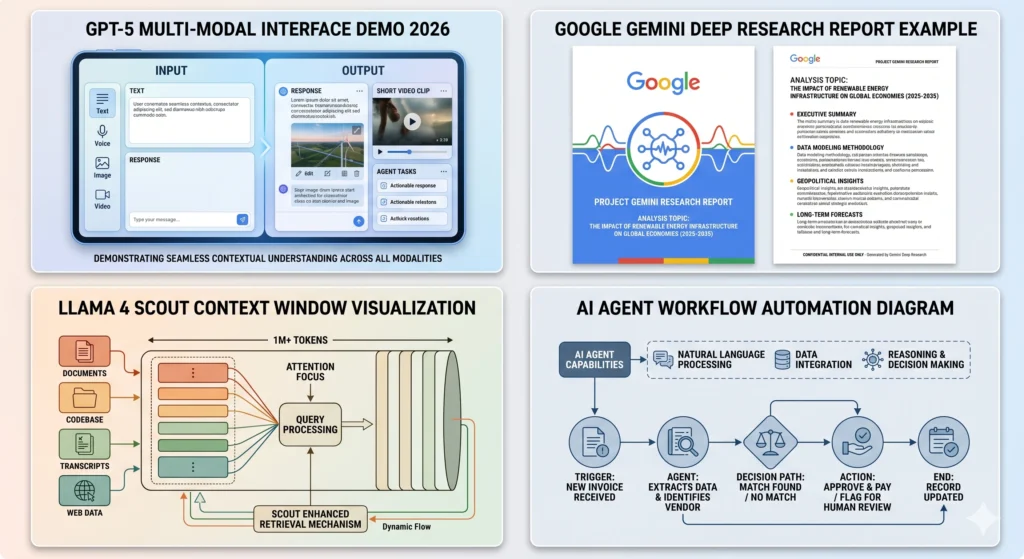

When OpenAI officially launched GPT-5 in early 2026, it wasn’t just another incremental update—it was a fundamental reimagining of what a language model could be. The standout feature? True native multi-modality. Unlike previous versions that bolted on vision or voice capabilities as separate modules, GPT-5 processes text, images, audio, and video through a unified architecture.

What does this mean in practice? Imagine showing GPT-5 a photo of your kitchen and asking, “What’s missing for a dinner party?” The model doesn’t just identify objects—it reasons about spatial relationships, understands social context, and provides actionable suggestions. There’s no lag, no switching between modes, no “I can’t see that.” It just works.

Alongside this integration, GPT-5 adds what OpenAI calls “System 2 thinking,” a deliberate reasoning mode in which the model pauses to work through complex problems before responding. It’s the difference between a quick gut reaction and careful analysis, and for tasks requiring precision, that difference matters.

GPT-5.3 Instant Mini: Speed Meets Intelligence

Not every application needs the full power of GPT-5, and OpenAI knows it. Enter GPT-5.3 Instant Mini, a lightweight variant designed for developers who need lightning-fast responses without breaking the bank.

Despite its smaller size, Instant Mini punches well above its weight. It handles routine queries, customer support interactions, and real-time applications with impressive competence. For startups and enterprises alike, this means you can integrate high-quality AI into your products without the computational overhead.

Early benchmarks show Instant Mini achieving 90% of GPT-5’s performance on common tasks while operating at 5x the speed and a fraction of the cost. For API developers, this is the sweet spot they’ve been waiting for.
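
One way to act on this trade-off is to send routine requests to the smaller model and reserve the full model for harder ones. The sketch below is only an illustration, not OpenAI’s API: the model identifiers, the word-count threshold, and the keyword list are all assumptions made up for the example.

```python
# Hypothetical model identifiers; substitute whatever your provider actually exposes.
SMALL_MODEL = "gpt-5.3-instant-mini"
LARGE_MODEL = "gpt-5"

# Assumed signals that a request needs deeper reasoning.
COMPLEX_HINTS = ("prove", "analyze", "step by step", "compare", "debug")


def choose_model(prompt: str, max_small_words: int = 60) -> str:
    """Pick a model name using simple, illustrative heuristics."""
    text = prompt.lower()
    too_long = len(text.split()) > max_small_words
    looks_complex = any(hint in text for hint in COMPLEX_HINTS)
    return LARGE_MODEL if (too_long or looks_complex) else SMALL_MODEL


if __name__ == "__main__":
    print(choose_model("What are your opening hours?"))
    print(choose_model("Analyze this contract and compare it to last year's."))
```

In practice you would tune the heuristics against your own traffic, or replace them with a small classifier, but the shape stays the same: decide first, then call the cheaper model whenever it is good enough.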

ChatGPT Images 2.0: Thinking Before Creating

Image generation has come a long way since the days of wonky hands and surreal distortions. ChatGPT Images 2.0 introduces a revolutionary “Thinking Phase” that mimics how human artists approach their work.

Before generating a single pixel, the AI now creates a compositional blueprint—planning lighting, perspective, color harmony, and subject placement. It iterates on this plan internally, refining details before committing to the final render. The result? Images that are not just technically impressive but artistically coherent.

Users report that Images 2.0 understands nuanced prompts like “a moody noir scene with dramatic chiaroscuro lighting” far better than previous versions. It’s not just following instructions; it’s interpreting creative intent.

Google Gemini: Deep Research & Personalized Ecosystems

The Deep Research Agent

If one Gemini feature is making waves in 2026, it’s Deep Research: what used to take a research assistant 10 hours now takes Gemini about 10 minutes.

Google’s Deep Research Agent isn’t just a smarter search engine. It’s an autonomous research assistant that can dive into academic papers, market reports, news archives, and technical documentation, then synthesize findings into a comprehensive report with proper citations.

Here’s a real-world example: A marketing director needs to understand the competitive landscape for sustainable packaging in the Southeast Asian market. In the past, this meant days of Googling, downloading PDFs, cross-referencing sources, and compiling notes. With Deep Research, she types a single prompt and receives a 15-page report within minutes—complete with charts, source links, and actionable insights.

What’s particularly impressive is Gemini’s emphasis on accuracy. Google says its verification protocols sharply reduce the “hallucinations” that plagued earlier AI models: every claim is tied to a citable source, and the system flags any information it can’t verify with high confidence.
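
The source-and-flag behavior described above can be modeled in a few lines. This is a minimal sketch, not Google’s implementation: the `Claim` structure, the confidence score, and the 0.8 threshold are assumptions introduced purely for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Claim:
    text: str
    sources: List[str] = field(default_factory=list)
    confidence: float = 0.0  # assumed score in [0, 1] from some upstream checker


def partition_claims(claims: List[Claim],
                     min_confidence: float = 0.8) -> Tuple[List[Claim], List[Claim]]:
    """Split claims into (verified, flagged) lists.

    A claim counts as verified only if it has at least one source
    and meets the confidence threshold; everything else is flagged.
    """
    verified, flagged = [], []
    for claim in claims:
        if claim.sources and claim.confidence >= min_confidence:
            verified.append(claim)
        else:
            flagged.append(claim)
    return verified, flagged
```

A report generator built this way would print verified claims with their citations and list flagged ones separately, so a reader knows what still needs a human check.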

Personalization via Nano Banana 2

Remember when AI-generated images felt generic and impersonal? Nano Banana 2 (officially Gemini 3 Flash Image) is changing that narrative.

This latest image generation model can tap into your Google Photos library—with your permission, of course—to create personalized images featuring you, your family, or your pets in virtually any scenario you can describe. Want to see your dog as a Renaissance painting? Your kids as astronauts on Mars? Nano Banana 2 makes it happen while keeping your data secure.

The privacy implications are significant. Google emphasizes that this personalization happens through on-device processing or encrypted channels, and that your photos are not used to train public models. The pitch is hyper-personalized AI without compromising privacy.

Gemini 3.1 Flash: Speed as a Feature

Underpinning all of this is Gemini 3.1 Flash, Google’s latest architecture optimized for speed and efficiency. In a world where milliseconds matter, Flash delivers responses up to 40% faster than its predecessor while maintaining—or even improving—accuracy.

For businesses running AI-powered customer service or real-time analytics, this speed boost translates directly into better user experiences and lower operational costs.

Meta & The Open Source Renaissance: Llama 4

Llama 4 Scout: The Context King

While OpenAI and Google battle for supremacy in the commercial space, Meta is quietly revolutionizing AI through open source. Llama 4 Scout is the crown jewel of this effort, and its headline feature is nothing short of staggering: a 10-million token context window.

Let’s put that in perspective. Most earlier models handled roughly 100,000 to 200,000 tokens at once, enough for a long document or a detailed conversation. Llama 4 Scout can take in far more: on the order of a hundred full-length books, years of company emails, or a massive codebase in a single prompt.
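
To make the scale concrete, here is a back-of-the-envelope estimate. Both conversion factors are assumptions: roughly 0.75 English words per token is a common rule of thumb, and 90,000 words is taken as a typical full-length book. Shorter books or a different tokenizer would change the count.

```python
CONTEXT_TOKENS = 10_000_000   # context window stated above
WORDS_PER_TOKEN = 0.75        # assumption: rough average for English text
WORDS_PER_BOOK = 90_000       # assumption: a typical full-length book


def books_that_fit(context_tokens: int,
                   words_per_token: float = WORDS_PER_TOKEN,
                   words_per_book: int = WORDS_PER_BOOK) -> int:
    """Estimate how many whole books fit in a context window."""
    total_words = context_tokens * words_per_token
    return int(total_words // words_per_book)


if __name__ == "__main__":
    print(books_that_fit(CONTEXT_TOKENS))  # 10M-token window
    print(books_that_fit(200_000))         # 200K-token window, for comparison
```

Under these assumptions a 10-million token window holds about 83 books, while a 200,000-token window holds one.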

For legal firms, this means analyzing decades of case law instantly. For researchers, it means cross-referencing entire scientific corpora. For developers, it means understanding a legacy codebase without manually piecing together documentation.

The business implications are profound. Companies can now ask questions like “What patterns do you see in our customer support tickets from the last five years?” and get comprehensive, data-driven answers without writing a single line of SQL.

Llama 4 Maverick: The Coder’s Edge

If Scout is the generalist, Llama 4 Maverick is the specialist—and it’s specializing in code. Benchmarks from independent testing labs show Maverick outperforming even proprietary models in Python, C++, and Rust development tasks.

What sets Maverick apart isn’t just raw coding ability; it’s understanding context. It can look at your entire project structure, understand architectural decisions, and suggest improvements that align with your existing patterns. It’s like having a senior engineer reviewing your code 24/7.

For small businesses and solo developers, this is democratizing access to expertise that was previously available only to well-funded tech companies. You don’t need a team of 50 engineers anymore—you need Llama 4 Maverick and a clear vision.

The Open Source Advantage

Meta’s commitment to open source means these models aren’t locked behind expensive APIs or usage limits. Developers can download, modify, and deploy Llama 4 models on their own infrastructure, giving them full control over data, costs, and customization.

This is fueling a renaissance of AI innovation outside the big tech bubble, with startups and independent developers building specialized tools that serve niche markets the giants overlook.

Anthropic Claude 4: The Ethics & Logic Leader

Professional Certification Breakthrough

When Claude 4 passed the Bar Exam and Medical Board certifications with scores in the top 1% of human test-takers, it wasn’t just a marketing stunt—it was a statement of capability.

Anthropic has positioned Claude 4 as the AI for high-stakes professional work, and the results back this up. In legal reasoning tasks, Claude 4 demonstrates an ability to parse complex statutes, identify relevant precedents, and construct logical arguments that rival junior associates at top firms. In medical contexts, it can analyze patient histories, cross-reference symptoms with current research, and suggest differential diagnoses with remarkable accuracy.

Importantly, Anthropic emphasizes that Claude 4 is designed to augment professionals, not replace them. The model is trained to flag uncertainty, request clarification, and defer to human judgment in ambiguous situations.

Advanced Reasoning and Self-Correction

Where Claude 4 truly shines is in its “chain-of-thought” reasoning. Unlike models that jump straight to answers, Claude 4 works through problems step-by-step, making its logic transparent and auditable. This is crucial for fields like law and science, where understanding how you reached a conclusion is as important as the conclusion itself.

Even more impressive is Claude 4’s self-correction mechanism. Before presenting a response, the model reviews its own reasoning, identifies potential gaps or errors, and refines its answer. It’s the AI equivalent of proofreading your essay before submission.
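
The draft, review, and refine cycle described above can be expressed as a simple loop. This is a sketch of the general pattern, not Anthropic’s internal mechanism: the `draft`, `critique`, and `revise` callables are hypothetical placeholders for model calls, and the toy versions below exist only so the loop can run on its own.

```python
from typing import Callable, List


def refine(question: str,
           draft: Callable[[str], str],
           critique: Callable[[str, str], List[str]],
           revise: Callable[[str, str, List[str]], str],
           max_rounds: int = 3) -> str:
    """Draft an answer, then revise until the critic finds no issues."""
    answer = draft(question)
    for _ in range(max_rounds):
        issues = critique(question, answer)
        if not issues:
            break  # nothing left to fix
        answer = revise(question, answer, issues)
    return answer


# Toy stand-ins so the loop can run without any model.
def toy_draft(question: str) -> str:
    return "2 + 2 = 5"


def toy_critique(question: str, answer: str) -> List[str]:
    return ["arithmetic error"] if answer.endswith("5") else []


def toy_revise(question: str, answer: str, issues: List[str]) -> str:
    return "2 + 2 = 4"


if __name__ == "__main__":
    print(refine("What is 2 + 2?", toy_draft, toy_critique, toy_revise))
```

The `max_rounds` cap matters: without it, a critic that always finds something to complain about would keep the loop running forever.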

Constitutional AI in 2026

Anthropic’s “Constitutional AI” framework has evolved significantly since its introduction. In 2026, it represents the gold standard for AI safety without sacrificing capability. Claude 4 operates within ethical guardrails that prevent harmful outputs while maintaining the flexibility to tackle complex, nuanced topics.

For enterprises concerned about AI risk, this makes Claude 4 an attractive option for sensitive applications in healthcare, finance, and legal services.

The Paradigm Shift: Understanding AI Agents

From Generative to Agentic AI

Here’s the biggest story of 2026: AI agents are no longer science fiction. They’re here, and they’re transforming how we work.

The distinction is important. Generative AI creates content—text, images, code. Agentic AI takes action. It can open applications, navigate websites, fill out forms, send emails, and coordinate across multiple platforms—all with minimal human oversight.

Think of it this way: Generative AI is like a brilliant consultant who gives you advice. Agentic AI is like an executive assistant who actually implements that advice.

Real-World Use Cases

Travel Management: Instead of spending an hour comparing flights, hotels, and rental cars, you tell your AI agent, “Book me a trip to San Francisco for the first week of May, budget $2,000, prefer direct flights and boutique hotels.” The agent researches options, makes bookings, adds everything to your calendar, and sends confirmation emails. If your flight gets cancelled, it automatically rebooks you and notifies affected parties.

Financial Planning: AI agents can monitor your investment portfolio, execute trades based on predefined strategies, rebalance assets, and generate tax reports. They don’t replace financial advisors, but they handle the tedious execution work, freeing advisors to focus on strategy and client relationships.

Workflow Automation: Imagine an agent that attends your virtual meetings, transcribes discussions, extracts action items, updates your project management tool (like Trello or Asana), and sends follow-up emails to stakeholders. This isn’t a distant future; it’s available today.
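
Under the hood, use cases like these come down to a loop that picks a tool, runs it, and records the result. The sketch below illustrates that loop under stated assumptions: the two tools are stubs with made-up names, and the plan is hard-coded, whereas a real agent would have a model choose the next step and would call actual booking, calendar, or email APIs.

```python
from typing import Callable, Dict, List, Tuple


# Hypothetical tools; each stub just returns a description of what it would do.
def search_flights(destination: str) -> str:
    return f"Found direct flight to {destination}"


def add_to_calendar(event: str) -> str:
    return f"Calendar updated: {event}"


TOOLS: Dict[str, Callable[[str], str]] = {
    "search_flights": search_flights,
    "add_to_calendar": add_to_calendar,
}


def run_agent(plan: List[Tuple[str, str]]) -> List[str]:
    """Execute a plan of (tool_name, argument) steps and return a log of results."""
    log = []
    for tool_name, argument in plan:
        tool = TOOLS.get(tool_name)
        if tool is None:
            # Unknown tools are skipped rather than crashing the whole run.
            log.append(f"Skipped unknown tool: {tool_name}")
            continue
        log.append(tool(argument))
    return log


if __name__ == "__main__":
    steps = [("search_flights", "San Francisco"),
             ("add_to_calendar", "Trip to San Francisco")]
    for line in run_agent(steps):
        print(line)
```

Keeping tools in a registry like `TOOLS` makes it easy to control exactly what an agent is allowed to do: if a capability isn’t in the dictionary, the agent can’t use it.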

The Supervision Spectrum

Not all agents are fully autonomous. Most operate on a spectrum from “human-in-the-loop” (requiring approval for each action) to “supervised autonomy” (handling routine tasks independently but escalating exceptions) to “full autonomy” (operating independently within defined parameters).

The key is choosing the right level for your use case. Critical decisions still benefit from human oversight, but routine tasks? Let the agents handle them.
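
The three levels translate naturally into an approval policy. Here is a minimal sketch, assuming each action can be labeled as routine or not and as inside or outside the agent’s defined parameters; those two flags and the function name are illustrative, not part of any particular agent framework.

```python
from enum import Enum


class Supervision(Enum):
    HUMAN_IN_THE_LOOP = "human-in-the-loop"
    SUPERVISED_AUTONOMY = "supervised autonomy"
    FULL_AUTONOMY = "full autonomy"


def needs_approval(level: Supervision, is_routine: bool, within_limits: bool) -> bool:
    """Return True when a human should approve the action before it runs."""
    if level is Supervision.HUMAN_IN_THE_LOOP:
        return True               # every action requires approval
    if level is Supervision.SUPERVISED_AUTONOMY:
        return not is_routine     # routine tasks run; exceptions escalate
    return not within_limits      # full autonomy applies only inside defined parameters
```

For example, a supervised agent would pay a recurring invoice on its own but escalate an unusual one, while a fully autonomous agent would only stop when an action falls outside its limits.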

On-Device AI & Privacy: The Offline Revolution

The Hardware Behind the Magic

One of the most significant 2026 AI trends isn’t about models at all—it’s about hardware. High-end smartphones, laptops, and tablets now ship with dedicated Neural Processing Units (NPUs) designed specifically for AI workloads.

These chips enable on-device AI, meaning models like Gemini Nano or optimized versions of Llama 4 can run entirely on your device without sending data to the cloud. The implications are huge.

Privacy and Security First

For industries handling sensitive information—healthcare, legal, finance, government—cloud-based AI has always posed a compliance challenge. On-device AI solves this by keeping data local. Your confidential documents never leave your machine. Your personal photos aren’t uploaded to servers. Your proprietary code stays proprietary.

This is why we’re seeing rapid adoption of on-device AI in enterprise environments. Companies can leverage powerful AI capabilities without violating data governance policies or exposing themselves to security risks.

Performance Benefits

Beyond privacy, on-device AI offers performance advantages. No internet connection? No problem. Your AI assistant still works. Need instant responses without network latency? On-device processing delivers.
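
An app that mixes on-device and cloud models needs a rule for choosing between them. The sketch below shows one possible local-first policy; the three inputs and the return values are assumptions for illustration, and a real product would likely weigh more factors, such as battery level or model quality.

```python
def choose_backend(is_sensitive: bool, is_online: bool, fits_on_device: bool) -> str:
    """Return "device", "cloud", or "unavailable" for a request."""
    if fits_on_device:
        return "device"        # local-first: no network latency, data stays local
    if is_sensitive:
        return "unavailable"   # never send sensitive data off the device
    return "cloud" if is_online else "unavailable"


if __name__ == "__main__":
    print(choose_backend(is_sensitive=True, is_online=False, fits_on_device=True))
    print(choose_backend(is_sensitive=False, is_online=True, fits_on_device=False))
```

The important design choice is the second branch: a sensitive request that is too large for the local model is refused rather than silently sent to a server.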

Battery life has also improved dramatically. Modern NPUs are incredibly efficient, running AI tasks with minimal power consumption. You get intelligence without draining your battery.

Conclusion: Preparing for the Future

As we navigate April 2026, one thing is clear: artificial intelligence is no longer a futuristic concept or a novelty tool. It’s an integral part of how we work, create, and solve problems.

The releases covered here, from GPT-5’s multi-modal reasoning to Claude 4’s professional expertise, from Llama 4’s open-source power to the rise of autonomous agents, represent more than technological progress. They represent a fundamental shift in what’s possible.

But here’s the truth: the AI models themselves aren’t the competitive advantage. How you use them is.

The professionals, businesses, and creators who thrive in this new landscape won’t be those with access to the most powerful models (though that helps). They’ll be the ones who adapt quickly, experiment boldly, and integrate AI thoughtfully into their workflows.

AI in 2026 isn’t here to replace human thought—it’s here to amplify human intent. It’s the difference between working harder and working smarter. Between being overwhelmed by information and being empowered by insight.

The question isn’t whether AI will transform your industry. It’s whether you’ll be leading that transformation or playing catch-up.

The future is agentic. The future is now. What will you build with it?

About the Author:

This guide was crafted to help professionals navigate the rapidly evolving AI landscape of 2026. Stay curious, stay informed, and keep building.

Last Updated: April 2026