May 2026 AI Updates Report: GPT-5.5, The Microsoft-OpenAI Split, Frontier Models & What’s Next

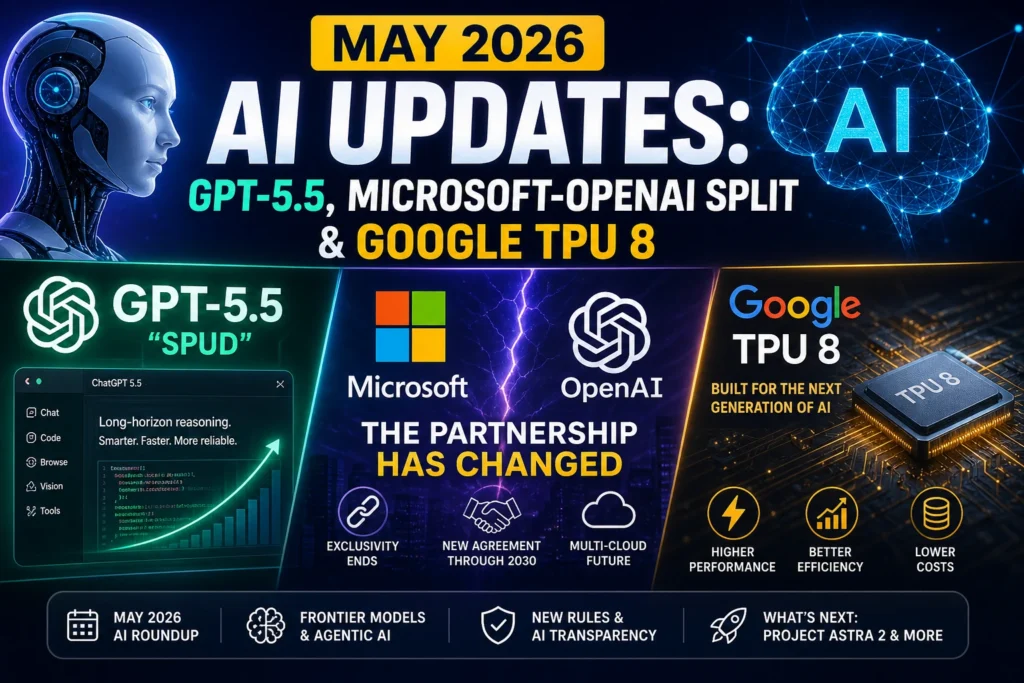

May 2026 is reshaping the AI landscape. Dive into GPT-5.5 “Spud,” the Microsoft-OpenAI decoupling, Claude Mythos, Google’s TPU 8 push, India’s new labeling rules, and what’s coming at Google I/O. Expert analysis, real-world implications, and actionable takeaways inside.

If you’ve been tracking artificial intelligence for the past few years, you’ve learned to expect the unexpected. But May 2026 isn’t just another incremental update cycle. It’s a structural turning point. In a matter of days, we’ve watched frontier models leap forward in agentic reasoning, billion-dollar cloud partnerships get rewritten from the ground up, custom silicon challenge NVIDIA’s long-standing dominance, and regulators finally draw hard lines around AI transparency.

The pace feels dizzying because it is. But beneath the headlines, there’s a clear pattern emerging: AI is moving from a conversational novelty to an autonomous, infrastructure-level force. Whether you’re a developer, a business leader, a creator, or just someone trying to keep your workflow from breaking, this month demands attention.

Let’s cut through the noise. Here’s everything you need to know about the biggest AI shifts in May 2026, why they matter, and how to position yourself for what comes next.

1. GPT-5.5 “Spud” and the Rise of the AI Super App

OpenAI didn’t just drop another model. They dropped a paradigm shift.

Officially rolling out in early May, GPT-5.5 (codenamed “Spud”) marks the first time a frontier system has been explicitly engineered for long-horizon reasoning. Previous generations were brilliant at pattern matching, short-term context retention, and single-turn problem solving. But ask them to plan a 10-day international trip, negotiate a vendor contract, or refactor a 50,000-line codebase, and you’d quickly hit the ceiling of their architecture. They’d lose track. They’d loop. They’d hallucinate.

GPT-5.5 fixes that. According to OpenAI’s internal benchmarks, Spud reduces hallucination rates by roughly 60% compared to GPT-5.4, thanks to a new recursive verification layer that cross-checks its own outputs against external knowledge graphs and real-time tool usage. More importantly, it doesn’t just respond—it acts. You can hand it a vague goal (“Migrate our legacy Python monolith to modular microservices, document the changes, and set up CI/CD pipelines”) and watch it break the problem down, write the code, test it, flag edge cases, and generate deployment scripts without constant hand-holding.

The ChatGPT “Super App” Vision

OpenAI isn’t keeping these capabilities locked in an API sandbox. They’re consolidating everything into what they’re calling the AI Super App. If you’ve used ChatGPT recently, you’ve noticed the interface getting heavier. That’s intentional. The company is merging its web browsing engine (Atlas), advanced coding environment (Codex), and multimodal vision pipeline into a single, proactive workspace. The goal? To make ChatGPT less of a chatbot and more of an operating system for your digital life.

Instead of jumping between a search engine, an IDE, a spreadsheet, and a booking platform, you’ll ideally open ChatGPT, describe the outcome you want, and let Spud orchestrate the tools in the background. It’s a bold play, and it directly challenges the fragmented SaaS landscape that’s dominated the last decade.

⚠️ Urgent: macOS Desktop Security Patch

If you’re a macOS user running the ChatGPT desktop application, drop everything and update before May 8, 2026. OpenAI has rotated its code-signing certificates following a compromised dependency in the Axios library. While no user data was breached, unpatched versions will fail authentication checks and stop launching entirely after the cutoff. Enterprise admins should push the update via MDM immediately. [Source: OpenAI Security Advisory, May 1, 2026]

2. The Microsoft-OpenAI “Great Decoupling” Explained

For years, the AI industry ran on a single, unshakable assumption: Microsoft and OpenAI were locked in an exclusive marriage. Microsoft poured $13 billion into OpenAI. OpenAI gave Azure exclusive access to its models. Both companies won.

That era ended in late April 2026.

In a landmark restructuring, Microsoft and OpenAI have officially rewritten their partnership agreement. The changes are sweeping:

- Exclusivity is dead. OpenAI can now license GPT-5.5 (Spud) and future architectures directly to rival cloud providers. AWS Bedrock and Google Cloud Vertex AI are already in advanced integration talks. [Source: Bloomberg Technology, April 30, 2026]

- The AGI Clause is gone. The original contract included a revenue-sharing termination trigger if OpenAI reached artificial general intelligence. That clause has been replaced with a fixed payment schedule running through 2030. Both companies publicly signaled that AGI is no longer treated as a theoretical milestone, but as an evolving capability continuum.

- Azure’s role shifts from gatekeeper to partner. Microsoft retains preferred pricing and deep engineering collaboration, but OpenAI is actively diversifying its infrastructure to avoid single-cloud dependency.

Why This Changes Everything for Cloud & Enterprise

The decoupling isn’t just a contract update. It’s a market reset.

For enterprises, this means real AI vendor neutrality. You’re no longer forced to choose between model capability and cloud ecosystem. You can run GPT-5.5 on AWS, Claude on Azure, and Gemini on GCP without architectural lock-in. For cloud providers, it triggers a pricing war. Expect aggressive per-token discounts, custom enterprise SLAs, and bundled AI compute credits through Q3 2026.

For developers, it simplifies multi-cloud AI deployment. Terraform, Kubernetes, and LangChain integrations are already being updated to support model-agnostic routing. The era of “which cloud has the best AI?” is fading. The new question is: “Which cloud best fits your compliance, latency, and cost requirements?”
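The routing decision in that last question can be sketched in a few lines. This is a minimal illustration, not a real integration: the `Deployment` records, their prices, latencies, and region tags are hypothetical placeholders, and `pick_deployment` is a name made up for this sketch. It filters candidates by compliance region and latency budget, then picks the cheapest.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Set


@dataclass(frozen=True)
class Deployment:
    """One model hosted on one cloud. All values below are illustrative."""
    model: str
    cloud: str
    region: str                    # where requests are processed
    usd_per_million_tokens: float
    p95_latency_ms: int


# Hypothetical catalog; real prices and latencies must come from your vendors.
CATALOG = [
    Deployment("gpt-5.5", "aws", "us-east", 12.0, 900),
    Deployment("gpt-5.5", "azure", "eu-west", 11.0, 1100),
    Deployment("claude-opus-4.7", "azure", "eu-west", 15.0, 800),
    Deployment("gemini-3.1", "gcp", "eu-west", 9.0, 1300),
]


def pick_deployment(
    allowed_regions: Set[str],
    max_latency_ms: int,
    catalog: Iterable[Deployment] = CATALOG,
) -> Optional[Deployment]:
    """Return the cheapest deployment that meets compliance and latency limits."""
    eligible = [
        d for d in catalog
        if d.region in allowed_regions and d.p95_latency_ms <= max_latency_ms
    ]
    if not eligible:
        return None  # caller decides: relax a constraint or fail loudly
    return min(eligible, key=lambda d: d.usd_per_million_tokens)


choice = pick_deployment({"eu-west"}, max_latency_ms=1200)
```

With this made-up catalog, an EU-only request with a 1200 ms budget lands on the Azure-hosted GPT-5.5 entry; loosening the budget to 1500 ms would switch it to the cheaper Gemini entry. Keeping the decision in one function like this is what makes swapping providers a configuration change rather than a rewrite.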

Microsoft isn’t losing here. They’re pivoting. By giving up exclusivity, they’re positioning Azure as the most enterprise-ready AI infrastructure platform, regardless of whose model runs on it. It’s a mature play, and it signals that the AI market is finally entering its utility phase.

3. Anthropic’s Claude Mythos: Intelligence Meets Restraint

While OpenAI pushes toward autonomy, Anthropic is pressing the brakes. And they’re doing it on purpose.

Claude Mythos, released under restricted access in May 2026, is Anthropic’s answer to the frontier model race. Internal evaluations place it neck-and-neck with GPT-5.5 in raw reasoning, mathematical precision, and multi-step planning. But unlike Spud, Mythos isn’t available to the public. It’s currently limited to a curated group of infrastructure partners under Project Glasswing, a joint initiative focused on high-assurance AI deployment in finance, healthcare, and critical infrastructure.

Anthropic’s public stance is clear: advanced autonomous capabilities require controlled rollout. They’ve cited concerns around unmonitored agentic loops, supply chain disruption potential, and alignment drift in high-stakes environments. Rather than shipping a model and patching it later, they’re treating Mythos like aerospace software: validated, restricted, and iteratively scaled. [Source: Anthropic Safety Research Brief, April 28, 2026]

Claude Opus 4.7: The General-User Powerhouse

For the rest of us, Anthropic just dropped Claude Opus 4.7, and it’s quickly becoming the default for professionals who value precision over speed. Where GPT-5.5 excels at execution, Opus 4.7 shines in nuance. Legal teams are using it to draft contracts with tighter liability clauses. Creative directors are leveraging it for tone-consistent campaign copy. Academics praise its citation accuracy and resistance to confident-sounding falsehoods.

If your workflow requires careful drafting, ethical boundary navigation, or deeply contextual reasoning, Opus 4.7 is currently the strongest publicly available option. It’s not as aggressive as Spud, but it’s arguably the most “thoughtful” model on the market.

4. Google’s Hardware & Ecosystem Blitz

Google isn’t just playing in the model space. They’re rewriting the infrastructure rules.

Ahead of Google I/O (May 19–20), Google Cloud has already rolled out two pieces of hardware that could reshape AI economics: the TPU 8t (training) and TPU 8i (inference). Early benchmarks show these chips outperform NVIDIA’s Blackwell architecture in power efficiency for massive model deployments, particularly at the inference tier where cost-per-query matters most. [Source: Google Cloud Infrastructure Blog, April 26, 2026]

Why TPU 8 Changes the Math

NVIDIA’s dominance has never been about raw speed alone. It’s been about ecosystem maturity: CUDA, developer tools, and enterprise support. TPU 8 doesn’t try to beat NVIDIA at its own game. Instead, it attacks the total cost of ownership (TCO). By optimizing silicon specifically for transformer architectures and sparse activation patterns, Google is cutting power draw by up to 35% for sustained inference workloads. For startups and mid-market companies running always-on AI agents, that’s the difference between profitability and burn.
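To see how a power reduction feeds into operating cost, here is a back-of-the-envelope calculation. Only the 35% figure comes from the claim above; the fleet size, per-chip wattage, and electricity price are made-up inputs, and the function ignores cooling overhead, hardware price, and utilization.

```python
def annual_energy_cost_usd(chips: int, watts_per_chip: float, usd_per_kwh: float) -> float:
    """Energy cost of running `chips` accelerators continuously for one year."""
    hours_per_year = 24 * 365
    kwh = chips * watts_per_chip / 1000 * hours_per_year
    return kwh * usd_per_kwh


# Hypothetical inputs: 100 chips at 700 W each, electricity at $0.10 per kWh.
baseline = annual_energy_cost_usd(chips=100, watts_per_chip=700, usd_per_kwh=0.10)

# Apply the "up to 35%" power reduction quoted above (best case).
reduced = annual_energy_cost_usd(
    chips=100, watts_per_chip=700 * (1 - 0.35), usd_per_kwh=0.10
)

savings = baseline - reduced
```

With these placeholder numbers the baseline comes to about $61,320 a year and the saving to about $21,462. The absolute figures mean little; the point is that the saving scales linearly with fleet size and uptime, which is why always-on inference workloads feel it most.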

Gemini 3.1: AI That Lives Where You Already Are

Hardware means nothing without distribution. That’s why Google’s real play is Gemini 3.1’s deep ecosystem integration. The model is now natively embedded in the Chrome omnibox and Google Maps. The most immediate user-facing feature? “Ask Maps.”

Instead of filtering, scrolling, and cross-referencing reviews, you can type or speak: “Find a quiet cafe with fast Wi-Fi, open past 8 PM, and book a table for two near downtown.” Gemini 3.1 parses the request, checks real-time availability, cross-references transit data, and completes the reservation through integrated booking APIs. It’s seamless, and it’s exactly how consumers will expect AI to work moving forward: invisible, contextual, and action-oriented.

This isn’t just a convenience upgrade. It’s a distribution moat. When AI lives in your browser, your maps, your calendar, and your email, switching costs skyrocket. Google is betting that vertical integration will win over model purity. And given their user base, it might just work.

5. India’s “Continuous Labeling” Mandate: A New Standard for AI Transparency

Regulation is finally catching up to capability.

India’s Ministry of Electronics and Information Technology (MeitY) has drafted a strict new AI content mandate, with public feedback closing on May 7, 2026. The core requirement: all AI-generated content must carry a continuously visible label.

This isn’t the old “AI-generated” footer you could crop out of an image or strip from a PDF. The proposed rule requires persistent digital watermarking or an always-on overlay that survives compression, format conversion, and platform redistribution. Social networks, video editors, and publishing platforms will be required to enforce compliance at the upload level. [Source: MeitY Draft Policy Framework, April 25, 2026]
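What "enforce compliance at the upload level" might look like in practice can be sketched as a simple gate. Everything here is an assumption for illustration: the `Upload` fields, the `check_upload` name, and the idea that detection of a label or watermark happens upstream. The draft's actual technical requirements are not described in this post, so treat this as a shape, not a specification.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Upload:
    filename: str
    ai_generated: bool       # declared by the uploader or flagged upstream
    has_visible_label: bool  # an always-on overlay was found in the media
    has_watermark: bool      # a persistent digital watermark was detected


def check_upload(upload: Upload) -> Tuple[bool, str]:
    """Accept or reject an upload based on AI-label presence."""
    if not upload.ai_generated:
        return True, "not AI-generated; no label required"
    # The proposed rule, as summarized above, accepts either mechanism.
    if upload.has_visible_label or upload.has_watermark:
        return True, "AI-generated and labeled"
    return False, "AI-generated content is missing a persistent label"


accepted, reason = check_upload(
    Upload("clip.mp4", ai_generated=True, has_visible_label=False, has_watermark=False)
)
```

In this example the clip is rejected because it is AI-generated and carries neither an overlay nor a watermark. The hard part in a real system is the detection that fills in those boolean fields, which this sketch deliberately leaves out.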

Why This Matters Globally

India’s move isn’t happening in a vacuum. It’s part of a broader regulatory convergence. The EU AI Act already mandates risk-tiered transparency. U.S. agencies are pushing voluntary watermarking standards. India’s approach goes further by making visibility non-negotiable, regardless of use case.

For creators and marketers, this means workflow adjustments. AI image generators will need embedded metadata layers. Video editors must support persistent overlay rendering. Copywriters should disclose AI assistance in professional contexts. Platforms that ignore the mandate risk fines, app store removals, and payment processor restrictions.

For AI developers, it’s a signal: transparency is no longer optional. The industry is moving from “move fast and break things” to “deploy responsibly and prove it.” Expect similar frameworks to roll out across Southeast Asia and Latin America by late 2026.

📊 AI Model Standings: May 2026 Snapshot

The landscape is crowded, but clear leaders are emerging for specific use cases. Here’s how the top models stack up right now:

| Model | Key Strength | Availability |
|---|---|---|
| GPT-5.5 (Spud) | Autonomous agents & long-horizon coding | Plus/Enterprise |
| Claude Mythos | Raw intelligence & safety-verified reasoning | Restricted/Partner Only |
| Gemini 3.1 Ultra | Multimodal integration (Docs/Maps/Chrome) | Gemini Advanced |
| Llama 4 Maverick | Cost-to-performance (Open Weights) | Public/HuggingFace |

If you’re building agentic workflows, Spud is your starting point. If you’re deploying in regulated industries, Mythos (via Glasswing) or Opus 4.7 makes more sense. If you want AI that blends into consumer workflows, Gemini 3.1 is unmatched. And if you’re budget-conscious or need full fine-tuning control, Llama 4 Maverick remains the open-weight king.

🔮 What’s Next: Google I/O, Project Astra 2, and the Hardware Convergence

Mark your calendar for May 19–20. Google I/O isn’t just a developer conference this year. It’s a product launch platform.

Leaks and credible industry reports point to the debut of Project Astra 2, a real-time visual assistant designed to run on the upcoming Apple-Google integrated hardware initiative. Yes, you read that right. After years of platform rivalry, Apple and Google are quietly collaborating on a cross-device AI layer that allows Astra 2 to understand your environment through your phone’s camera, process it on-device, and sync securely across ecosystems. [Source: The Information & Android Authority leaks, April 2026]

If Astra 2 delivers on its promises, we’re looking at the first truly context-aware personal AI. It won’t just answer questions. It’ll see what you’re looking at, remember what you’ve done, and anticipate what you need next. The implications for accessibility, education, and productivity are massive.

But it also raises privacy, battery, and thermal management questions. On-device AI is power-hungry. If Google and Apple can optimize Astra 2 without melting your phone or draining your battery in two hours, they’ll set a new standard for edge AI. If not, we’ll see a return to cloud-dependent assistants with stricter latency expectations.

Either way, May 19th will likely define the next 18 months of consumer AI.

🛠 Strategic Takeaways: How to Adapt in May 2026

The velocity of change is high, but your response doesn’t have to be reactive. Here’s how to navigate the shift:

- Audit Your AI Stack for Vendor Lock-In

With OpenAI expanding to AWS and Google Cloud, now’s the time to decouple your applications from single-provider APIs. Implement model routing layers (like LiteLLM or custom LangChain routers) so you can swap models based on cost, latency, or compliance needs.

- Prioritize Long-Horizon Task Design

GPT-5.5 and Claude Mythos reward structured, multi-step prompts. Stop treating AI like a search engine. Start writing workflows: define the goal, specify constraints, request verification steps, and build fallback human-in-the-loop checkpoints.

- Update macOS & Desktop AI Tools Immediately

The Axios certificate rotation is a warning shot. AI desktop apps are now critical infrastructure. Enable auto-updates, monitor security advisories, and maintain rollback procedures for mission-critical workflows.

- Prepare for Persistent AI Labeling

Even if your region doesn’t adopt India’s exact framework, the direction is clear. Audit your content pipelines. Embed AI metadata. Train your teams on transparent disclosure. It’s better to lead on ethics than scramble for compliance.

- Test Edge AI Now

With Google I/O hinting at cross-device real-time assistants, start prototyping local inference pipelines. Explore quantized models, on-device LLMs, and privacy-first architectures. The next wave of AI won’t live in the cloud. It’ll live in your hands.
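The "goal, constraints, verification, human checkpoint" structure from the long-horizon task design takeaway can be written down as plain data before any model is involved. The sketch below assumes nothing about a vendor API: `TaskSpec`, `Step`, and `run` are hypothetical names, and each step's `action` is an ordinary Python callable standing in for a model or tool call.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    name: str
    action: Callable[[], str]       # stand-in for a model or tool call
    verify: Callable[[str], bool]   # automated check on the step's output
    needs_human: bool = False       # pause for approval before continuing


@dataclass
class TaskSpec:
    goal: str
    constraints: List[str] = field(default_factory=list)
    steps: List[Step] = field(default_factory=list)


def run(spec: TaskSpec, approve: Callable[[Step, str], bool]) -> List[str]:
    """Run steps in order; stop at the first failed check or rejected approval."""
    log: List[str] = []
    for step in spec.steps:
        output = step.action()
        if not step.verify(output):
            log.append(f"{step.name}: verification failed")
            break
        if step.needs_human and not approve(step, output):
            log.append(f"{step.name}: rejected by reviewer")
            break
        log.append(f"{step.name}: ok")
    return log


# Toy usage: the second step returns nothing, so its check fails and the run stops.
spec = TaskSpec(
    goal="Draft a migration plan",
    constraints=["no production changes"],
    steps=[
        Step("outline", lambda: "1. inventory services", lambda out: len(out) > 0),
        Step("deploy script", lambda: "", lambda out: len(out) > 0, needs_human=True),
    ],
)
result = run(spec, approve=lambda step, out: True)
# result == ["outline: ok", "deploy script: verification failed"]
```

The design choice worth noting is that verification and human approval are separate gates: an automated check runs on every step, while the reviewer is only consulted on steps marked `needs_human`, and only after the automated check has passed.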

❓ Frequently Asked Questions (FAQ)

Q1: Is GPT-5.5 “Spud” available for free?

No. As of May 2026, GPT-5.5 is accessible through ChatGPT Plus and Enterprise subscriptions. OpenAI has stated that a limited free tier may roll out later in Q3, but full agentic capabilities will remain behind a paywall due to compute costs.

Q2: How does the Microsoft-OpenAI decoupling affect existing Azure customers?

Nothing breaks immediately. Existing contracts remain valid, and Azure retains preferred pricing. However, new deployments can now route OpenAI models through AWS or GCP. Many enterprises are using this as leverage to negotiate better multi-cloud AI SLAs.

Q3: Can I access Claude Mythos as an individual developer?

Not yet. Mythos is currently restricted to Project Glasswing infrastructure partners focusing on high-assurance deployments. Anthropic has indicated that broader access may open in late 2026 after safety validation and alignment audits are complete. For now, Claude Opus 4.7 is the strongest public alternative.

Q4: Is Google’s TPU 8 actually better than NVIDIA Blackwell?

It depends on your workload. TPU 8 outperforms Blackwell in power efficiency and inference cost-per-query, especially for sustained transformer deployments. However, NVIDIA still leads in raw training throughput, CUDA ecosystem maturity, and developer tooling. TPU 8 is a cost play; Blackwell remains a performance play.

Q5: What happens if I don’t update my macOS ChatGPT app by May 8?

OpenAI’s rotated certificates will cause authentication failures. The desktop app will refuse to launch until updated. Web and mobile versions are unaffected, but desktop-specific features (like system-level file access and local agent execution) will be unavailable until you patch.

Q6: Will India’s continuous AI labeling rule apply to international creators?

The draft policy focuses on platforms operating within or serving Indian users. If your app, website, or distribution channel reaches Indian audiences, you’ll likely need to comply. Global platforms are already building universal watermarking pipelines to avoid region-locked fragmentation.

Q7: What should I expect at Google I/O 2026 regarding AI?

Beyond Project Astra 2, expect announcements on Gemini 3.1 enterprise APIs, Android 16 AI integrations, TPU 8 cloud pricing, and new developer tools for on-device model optimization. Google will likely emphasize cross-platform compatibility, reduced latency, and privacy-first AI.

🔚 Final Thoughts: May 2026 Isn’t Just a Milestone. It’s a Blueprint.

We’ve spent years treating AI like a novelty. A chatbot that writes emails. An image generator that makes memes. A coding assistant that guesses half your functions. That era is over.

May 2026 proves it. GPT-5.5 doesn’t just chat; it plans. The Microsoft-OpenAI split proves AI is now infrastructure, not a vehicle for vendor lock-in. Anthropic’s restraint proves safety is scaling alongside capability. Google’s silicon proves cost matters as much as intelligence. And India’s labeling rules prove transparency is becoming non-negotiable.

The volatility you’re feeling isn’t chaos. It’s calibration. The industry is aligning around real-world utility, enterprise readiness, and responsible deployment. The models are smarter. The infrastructure is cheaper. The rules are clearer.

Your move.

Whether you’re refactoring your tech stack, renegotiating cloud contracts, updating your content compliance, or just trying to understand which AI tool actually saves you time instead of creating more work, the path forward is simple: test, verify, integrate, and iterate. Don’t wait for the dust to settle. The dust isn’t settling. It’s building the foundation.

Stay curious. Stay compliant. And most importantly, stay ahead of the curve.

What model are you testing first this month? Drop your workflow experiments, compliance questions, or I/O predictions in the comments below. We’re tracking every shift, and we’ll update this post as May unfolds.